China’s finalized rules for generative Artificial Intelligence (AI), effective from August 15, are considerably less restrictive than a draft version that had circulated previously. This shows that authorities, particularly China’s internet regulator, the Cyberspace Administration of China (CAC), have been receptive to public comments and industry concerns as countries race to develop the most powerful large language models (LLMs) – algorithms that can generate text closely resembling human language.

To understand the present and future of AI governance in China, it is important to consider that filtering undesirable information is a major – but not the only – lens through which Beijing thinks about algorithms. In keeping with an emerging trend in China’s digital governance, its new regulations aim to nudge the design and deployment of Chinese LLMs toward alignment with national interests.

The CAC has had a tough awakening to the need to balance control and censorship with freedom for technological development. Compared with its previous regulatory actions in the field of AI, this is one of the first times it has exercised its authority in a field that is subject to intense geopolitical competition. This has put the CAC’s security-first approach in open contradiction with China’s innovation and geopolitical ambitions.

China’s AI industry, which the government considers of immense economic and strategic significance, has entered a period of intense uncertainty. U.S. export control measures from October 2022 have complicated Chinese firms’ access to advanced chips needed to train AI models to create text, specifically some GPU models sold by American chip design giant Nvidia.

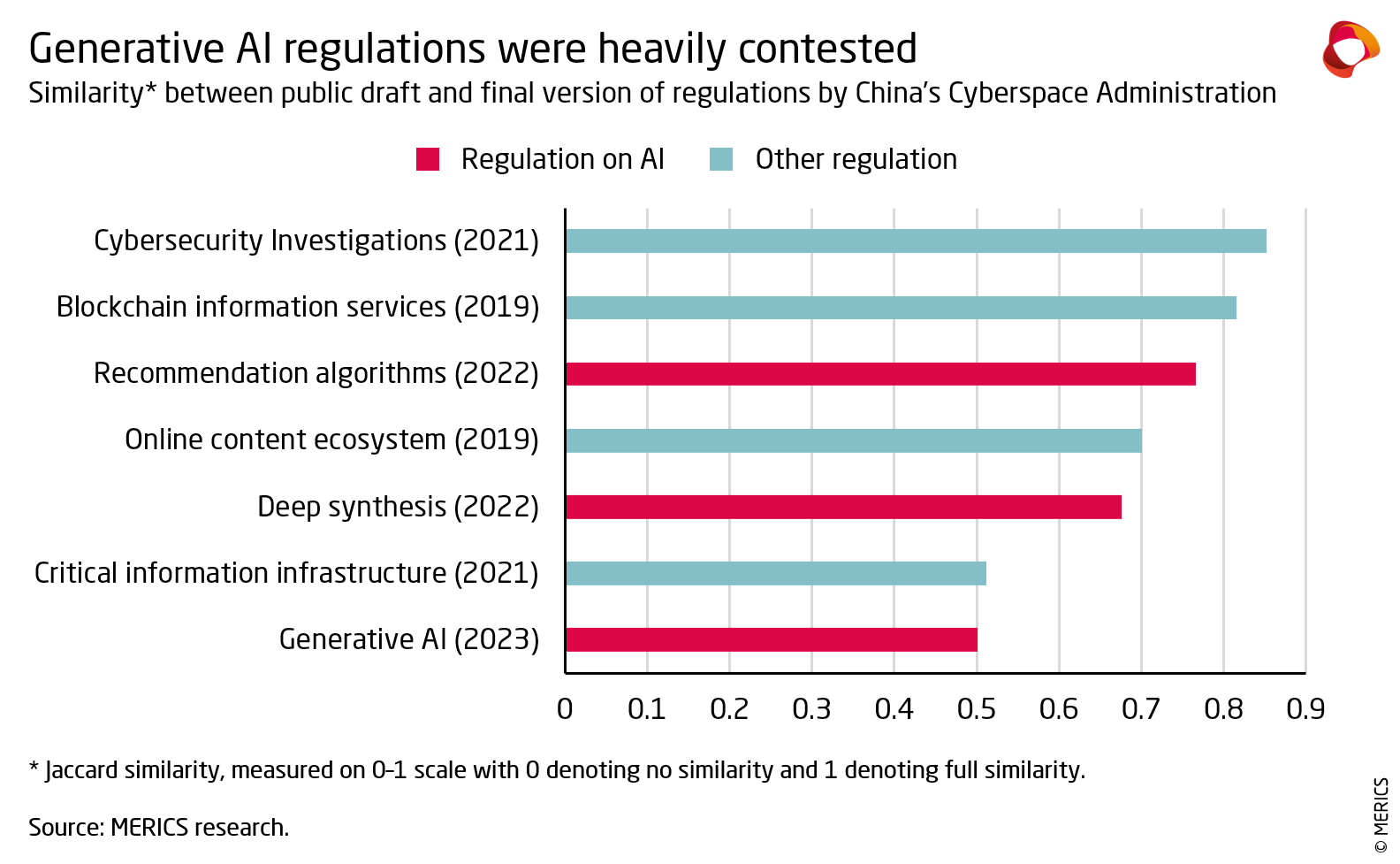

As a result, the new rules have been among the most significantly amended regulations in the history of the CAC. Most crucially, these regulations now exempt any non-public-facing applications – such as LLMs for medical diagnosis or industrial process automation – from scrutiny, including all research and development activities.

Specific requirements have also been softened. Domestic AI experts had called for major revisions to clauses requiring companies to guarantee that their training data was sufficiently accurate and true. Such guarantees would be an enormous task, given the billions of data points used to train large language models. Now, these clauses have been watered down to require only “sufficient measures” as a guarantee.

Ever since the publication of the New Generation Artificial Intelligence Development Plan in 2017, the party-state, with Xi Jinping at its helm, has signaled a firm intention to both tame and harness those AI algorithms that have the power to shape public opinion online. One way it has done so is through regulatory actions, most notably landmark rules on recommender systems that represent one of the most pervasive forms of AI today. In the Chinese Communist Party’s vision, algorithms that push content to users should not be used to enrich powerful corporations, but instead to promote officially endorsed values. For example, the CAC wants social media platforms to use algorithmic recommendations to refute rumors and promote “positive energy.”

Yet, it would be a mistake to see censorship as the only driver. China’s tech rectification campaign has illustrated that authorities are not interested in sustaining platforms’ business models if addiction, financial risk, and socioeconomic scandals are the imminent costs to society. Regulators are tackling societal concerns and possible harms from risks like AI-generated drug prescriptions or scams. Some regulations contain specific clauses to prevent digital addiction of minors while others require special accessibility modes to support the elderly, among other things.

In 2000, U.S. President Bill Clinton famously remarked that China’s attempt at developing a censored internet was “sort of like trying to nail Jell-O to the wall”; Chinese internet giants proceeded to create the world’s most vibrant digital economy, their platforms complying and even assisting with Beijing’s censorship requirements. The generative AI rules similarly leave room for flexibility to support China’s industry in taking on OpenAI’s ChatGPT, even as they expect companies to overcome the “political alignment problem” – that is, to figure out technical ways to ensure that as little generated content as possible violates the Chinese Communist Party’s strict information controls. They seek to square the circle between security and development objectives around new technology.

While the CAC will remain wary of netizen-facing chatbots, China’s central and local governments are supporting model training that embraces generative AI applications in areas that matter for Xi Jinping’s much-touted “real economy,” from accelerating the discovery of new drugs and streamlining medical communications to upgrading manufacturing. In July, Huawei launched Pangu 3.0, a set of pre-trained models that tackle industrial pain points, like faults in rail freight carriages. Generative AI is welcomed even in law, to help courts automate document summaries and filing. This is why the new regulations purposely exempt such non-public-facing applications from their purview.

Overall, Beijing’s regulatory actions on generative AI do not appear to pose a risk of killing innovation and chilling private tech firms. Even as the CAC is, by its very mandate, primarily interested in limiting the influence of ChatGPT-like products on public opinion, it had to back down and soften the requirements for LLM developers. China’s AI governance may continue to surprise observers as the government tries to strike a balance between control and development.